The Computing Community Consortium (CCC) has released an updated report on quantum computing progress over the past five years, based on a workshop held in spring 2023. While the CCC report doesn’t break new ground, it’s a good overview.

CCC posted a blog post on the report this week, written by Catherine Gill, which notes:

“Quantum Computing is in the Noisy Intermediate Scale Quantum (NISQ) era currently, meaning that Quantum Computers are still prone to high error rates and are able to maintain few logical qubits. The work being done in Quantum Error Correction, however, is enabling Quantum Computing to transition towards a Fault-tolerant future. “There has been remarkable progress in quantum computer hardware in the last five years”, says Kenneth Brown, Professor of Engineering at Duke University, “but challenges remain in terms of reducing errors and scaling systems. We thought it was critical to bring together experts in quantum computing, computer architecture, and systems engineering to plan for the next ten years.”

The workshop and subsequent report focused on five areas:

- Technologies and Architectures with a View Towards Scaling. Scalable architectures demand that larger systems yield lower computational error and decreased per qubit costs, and reaching practical quantum computation will require creativity and cross-discipline collaboration within academia and industry to produce technological innovation that permeates the quantum compute stack. Further, improved models that are faithful to the dynamics of actual systems will help push progress forward by defining the practical constraints we must consider when making theoretical quantum systems a reality.

- Applications and Algorithms. There is a clear need for more applications and algorithms with practical quantum advantage. This requires both producing near-term applications with demonstrated experimental advantage and continuing to develop keystone applications which have strong theoretical evidence of advantage. To facilitate these goals, we recommend reducing resource requirements of keystone applications, exploring near-term applications via domain integration, and benchmarking hardware to enable algorithm development.

- Fault Tolerance and Error Mitigation. QCs are limited by noise. In the near-term, error mitigation will reduce application noise and quantum error correction (QEC) demonstrations will inform future QC design. Large scale quantum computation will require error correction and fault tolerance. Current developments in QEC codes present opportunities for co-design of quantum architectures. Systems that combine fault-tolerant principles and error-mitigation methods can serve as a bridge between current systems and future large-scale QCs.

- Hybrid Quantum-Classical Systems: Architectures, Resource Management, and Security. Quantum hardware will likely be advantageous on specialized computations, and the solution of most practical problems will require a hybrid solution with substantial classical computation in cooperation with a quantum kernel. The organization of these hybrid systems and the hybrid algorithms that run on them will be key areas of research. Classical computation for quantum circuit optimization, simulation, and verification will also be key enablers. Finally, an emerging concern is the secure design of quantum systems in the face of potential vulnerabilities.

- Tools and Programming Languages. The tools for quantum programming are still relatively new. Quantum programming today requires a deep knowledge of unitary mathematics and its associated linear algebra. Even with this knowledge, well-known algorithms are non-intuitive to newcomers, and new algorithms are difficult to reason about even for quantum experts. To welcome newcomers to the field, to facilitate research, and to permit scaling up to programs with quantum advantage, efficient high-level quantum programming abstractions are needed. To realize such abstractions, software engineering infrastructure is needed for compilation, verification, and simulation, both for near-term and long-term hardware.
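
The report's point that scalable systems should yield lower error as they grow can be illustrated with a toy model. The sketch below is not taken from the report: it assumes a classical bit-flip repetition code with independent errors and majority-vote decoding, which is far simpler than the QEC codes the report discusses. The function name `logical_error_rate` and the sample error rates are hypothetical, chosen only for illustration.

```python
from math import comb


def logical_error_rate(p: float, d: int) -> float:
    """Failure probability of majority-vote decoding for a distance-d
    repetition code, assuming each of the d bits flips independently
    with probability p. This is a toy model, not a real QEC simulation."""
    if d < 1 or d % 2 == 0:
        raise ValueError("d must be a positive odd integer")
    # Majority vote fails when more than half of the d bits are flipped.
    min_flips = (d + 1) // 2
    return sum(
        comb(d, k) * p**k * (1 - p) ** (d - k)
        for k in range(min_flips, d + 1)
    )


if __name__ == "__main__":
    # Below the 50% threshold of this toy model, adding bits helps;
    # above it, adding bits makes the encoded bit less reliable.
    for p in (0.01, 0.6):
        rates = [logical_error_rate(p, d) for d in (1, 3, 5, 7)]
        print(f"p={p}: " + ", ".join(f"{r:.3e}" for r in rates))
```

In this simplified setting, a larger code only pays off when the physical error rate is below a threshold, which is one reason reducing errors and scaling systems are treated as linked challenges.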

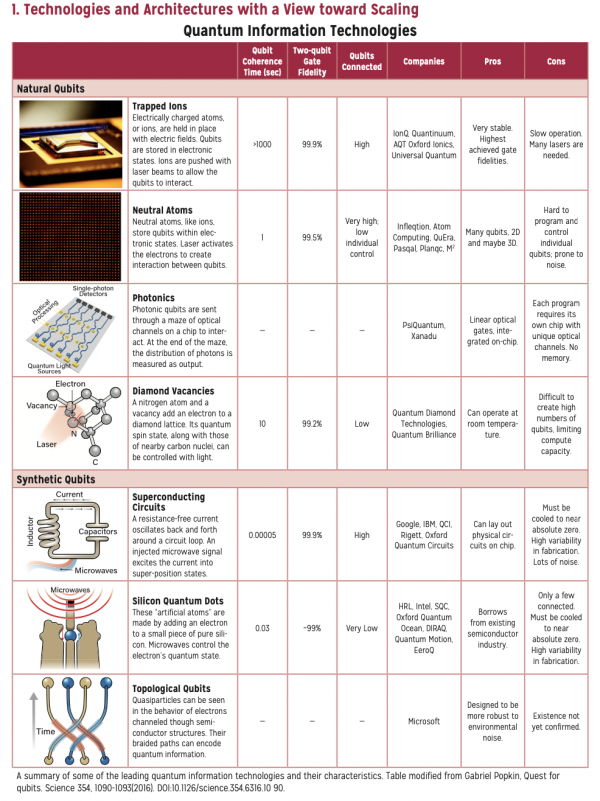

The infographic list of qubit types above is a nice primer. It’s necessarily incomplete, as the number of qubit types seems to grow daily.

The report concludes “Quantum computing is at a historic and pivotal time, with substantial engineering progress in the past 5 years and a transition to fault-tolerant systems in the next 5 years. Taking stock of what we have learned from NISQ systems, this report examined 5 key areas in which computer scientists have an important role in exploring.”

Among the report’s interesting findings is a recommendation to standardize QC benchmarking. “We recommend exploring standardized benchmarking frameworks to identify a set of benchmarks which would enable us to evaluate quantum platforms, algorithms, and potential domain problems. For example, an end-to-end quantum machine learning benchmark would allow us to evaluate not only the general performance of a quantum device, but also the algorithm’s noise resilience and data sensitivity. More work on widely accepted benchmarks with input from other communities (computer scientists, machine-learning communities) may also lead to increased collaboration and interest from other domain experts.”

(CCC is an NSF-created entity, established in 2007 – “The CCC operates as a programmatic committee of CRA under CRA’s bylaws: its membership only slightly overlaps the CRA’s Board of Directors; it has significant autonomy; and it has a great deal of synergistic mutual benefit with CRA. The CCC Council meets three times every calendar year, including at least one meeting in Washington, D.C., and has biweekly conference calls between these meetings. Also, the CCC leadership has biweekly conference calls with the leadership of NSF’s Directorate for Computer and Information Science and Engineering (CISE).”)

Link to CCC blog, https://cccblog.org/2024/01/25/ccc-releases-the-5-year-update-to-the-next-steps-in-quantum-computing-workshop-report/

Link to CCC report, https://cccblog.org/wp-content/uploads/2024/01/5-Year-Update-to-the-Next-Steps-in-Quantum-Computing.pdf

The report authors include: Kenneth Brown, Duke University; Fred Chong, University of Chicago; Kaitlin N. Smith, Northwestern University and Infleqtion; Thomas M. Conte, Georgia Institute of Technology and Computing Community Consortium; Austin Adams, Georgia Institute of Technology; Aniket Dalvi, Duke University; Christopher Kang, University of Chicago; Josh Viszlai, University of Chicago.